To Be Human Has Been to Know the Torment of Hunger

When the Lamb opened the third seal, I heard the voice of a living creature say, “Come!” As I looked, there was a black horse, and its rider’s name was Famine. Hades followed along behind him. They were given authority over a quarter of the earth, to kill with hunger.

~ Revelation 6:7-8

There was once a man who dreamed that through science, we could make a world where no one would ever perish from hunger. And famine would be no more. He gave us a treasure to fulfil that promise, but he faced a terrible choice at a moment of reckoning. To lie about science and live, or to tell the truth and face certain death.

On the Origin of Species

For the first couple of hundred thousand years that we were human, we were wanderers living beneath the stars. We gathered plant life and hunted animals until about ten or twelve thousand years ago, when our ancestors invented a new way to live. Think of those geniuses who were the first to realize that inside the plants they foraged was a means to make another plant: a seed.

Our ancestors could continue wandering in small bands or settle down to grow and raise their food. This required sacrifices for rewards that would not come until much later. For the first time, we were thinking about the future.

Of course, these decisions weren’t instantaneous. They unfolded over generations. That seems like a long time ago in human terms, but in the great sweep of cosmic time, it was less than half a minute ago. For the first time, the wanderers were settling down and building things to last for more than a single season. They dared to touch the future.

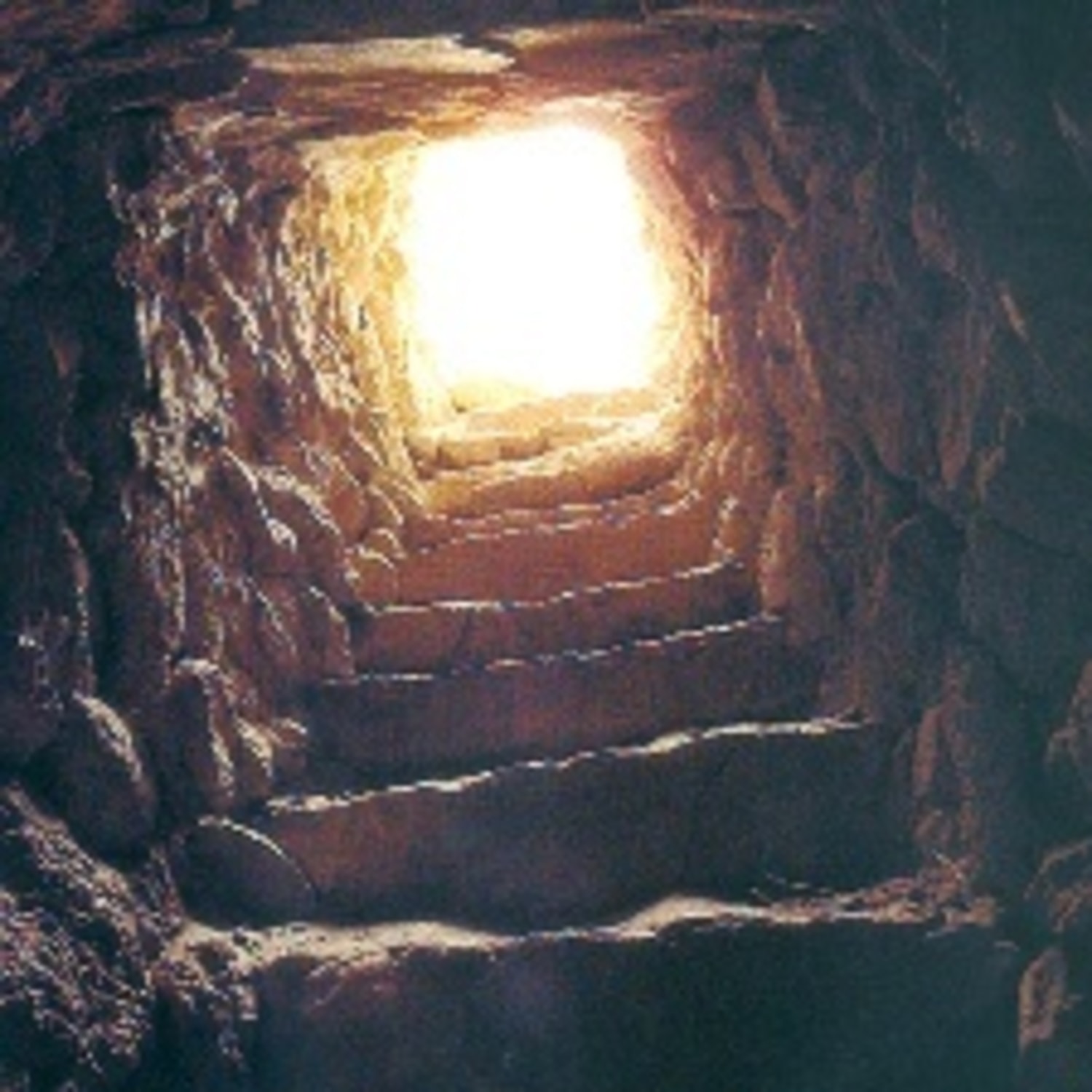

Was it a watchtower for protecting the city from invaders? Or just a way to get closer to the stars? It took 11,000 work days to build, something that was only possible with the food surpluses that agriculture provided. It was already 5,000 years old before the first Egyptian pyramid was built. To climb it is to follow in the footsteps of 300 generations. Isn’t it astonishing that people who had barely ceased wandering were able to create something of such permanence?

The rich and varied hunter-gatherer diet, of plants, insects, birds and other animals, was replaced. City dwellers largely subsisted on a few carbohydrate crops. And when the rains didn’t come or a fungus afflicted the grain, there was hunger on a massive scale: famine.

- Famines caused by drought and British colonial mismanagement in India in the 18th century killed ten million people.

- In China, during the famines of the 19th century, more than 100 million people perished.

- The great hunger in Ireland, also a result of British imperial policy, starved a million to death and forced another two million to flee the country in search of a living.

- The Brazilian drought and pestilence of 1877 was comparable. In a single province, more than half died of starvation.

- The dead remain uncounted from the famines that wracked Ethiopia, Sudan and the Sahel in Africa.

For a couple of thousand years, ever since records were kept, somewhere on Earth, people in great numbers have starved.

Could agriculture become a science with a predictive theory as reliable as gravity? One that could consistently produce breeds able to stand up to drought and disease? Farmers and herders knew the advantages of preferentially selecting the hardiest specimens for crossbreeding, to produce a more successful hybrid. This was known as artificial selection. However, the mechanism by which those traits were passed on to succeeding generations remained a complete mystery.

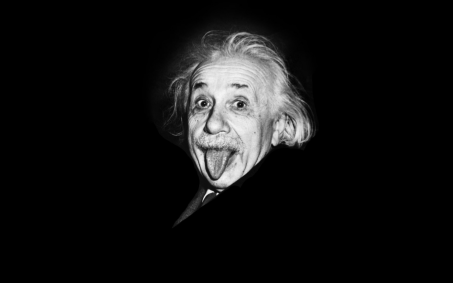

Charles Darwin discovered that species, including ours, evolve over time through a process of natural selection. The environment rewards those best adapted to its changing realities with survival and with new generations of offspring. Darwin demystified the external reality of life, but no one had any idea what the inner mechanisms of evolution were.

At that same moment, Gregor Mendel, an abbot at a rural monastery in what is now the Czech Republic, was trying to become a science professor.

In his spare time, Mendel took up the study of pea plants. He bred tens of thousands of them, carefully scrutinizing the height, shape, and colour of their pods, their seeds and flowers. He was searching for a predictive theory of breeding. So that you could know in advance exactly what you would get when you crossed a tall plant with a short one and a green pea with a yellow one. Mendel found that you would get a yellow pea every time. We didn’t have a word for the power of the yellow over the green until Mendel coined it. He called that quality “dominant.” And to his delight, he found that he could predict what would happen in the next generation of peas after that. One in four pea plants would be green. Mendel named the hidden trait that popped up in the next crop, “recessive.” There was something he called “factors,” hidden inside the plants that caused particular characteristics. And they operated by a law that Mendel could describe with a simple equation, like gravity.
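
The arithmetic behind that prediction can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the letters "Y" (dominant yellow) and "y" (recessive green) are a modern shorthand, not Mendel's own notation, and the function names `cross` and `phenotype_shares` are invented here for the example. Each parent is assumed to pass on one of its two factors with equal chance.

```python
from collections import Counter
from fractions import Fraction
from itertools import product


def cross(parent_a: str, parent_b: str) -> Counter:
    """Count offspring genotypes from every equally likely pairing of factors."""
    offspring = Counter()
    for factor_a, factor_b in product(parent_a, parent_b):
        # Sort so that "yY" and "Yy" are counted as the same genotype
        # (uppercase letters sort before lowercase ones).
        offspring["".join(sorted((factor_a, factor_b)))] += 1
    return offspring


def phenotype_shares(offspring: Counter) -> dict:
    """Share of offspring showing each colour; "Y" (yellow) masks "y" (green)."""
    total = sum(offspring.values())
    shares = {"yellow": Fraction(0), "green": Fraction(0)}
    for genotype, count in offspring.items():
        colour = "yellow" if "Y" in genotype else "green"
        shares[colour] += Fraction(count, total)
    return shares


# Hypothetical parents: one pure-breeding yellow plant, one pure-breeding green.
first_generation = cross("YY", "yy")
# Crossing two plants from that first generation with each other.
second_generation = cross("Yy", "Yy")

print(phenotype_shares(first_generation))
print(phenotype_shares(second_generation))
```

Under these assumptions, every plant in the first generation carries one of each factor and shows yellow, while the second generation splits three yellow to one green, which matches the one-in-four figure described above.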

Charles Darwin and Gregor Mendel didn’t know it, but the two scientists were deciphering the mysterious workings of life at the very same moment:

- Darwin presented the evidence for a oneness with all life. That despite our pretensions to a mystical higher birth, we were actually relatives of the other beasts and vegetables. As much a part of the natural world as any other living thing.

- Mendel discovered that there were laws governing the way life’s messages were passed on. The substitute teacher had invented a whole new field of science. But nobody noticed for 35 years. He died never knowing that the world would come to see him as a giant in the history of science.

Mendel’s work was resurrected at the beginning of the 20th century and he had no more vigorous defender than the British zoologist William Bateson. It was Bateson who named the new field of science devoted to studying these factors: genetics.

Bateson and his colleagues worked on developing new breeds of plants and animals. He believed that science and freedom were indivisible and that’s how he ran his laboratory.

Winter is Coming

The idea that our planet is a single organism, a unity, has a ring of hollow sentimentality for many people. But it’s just a scientific fact.

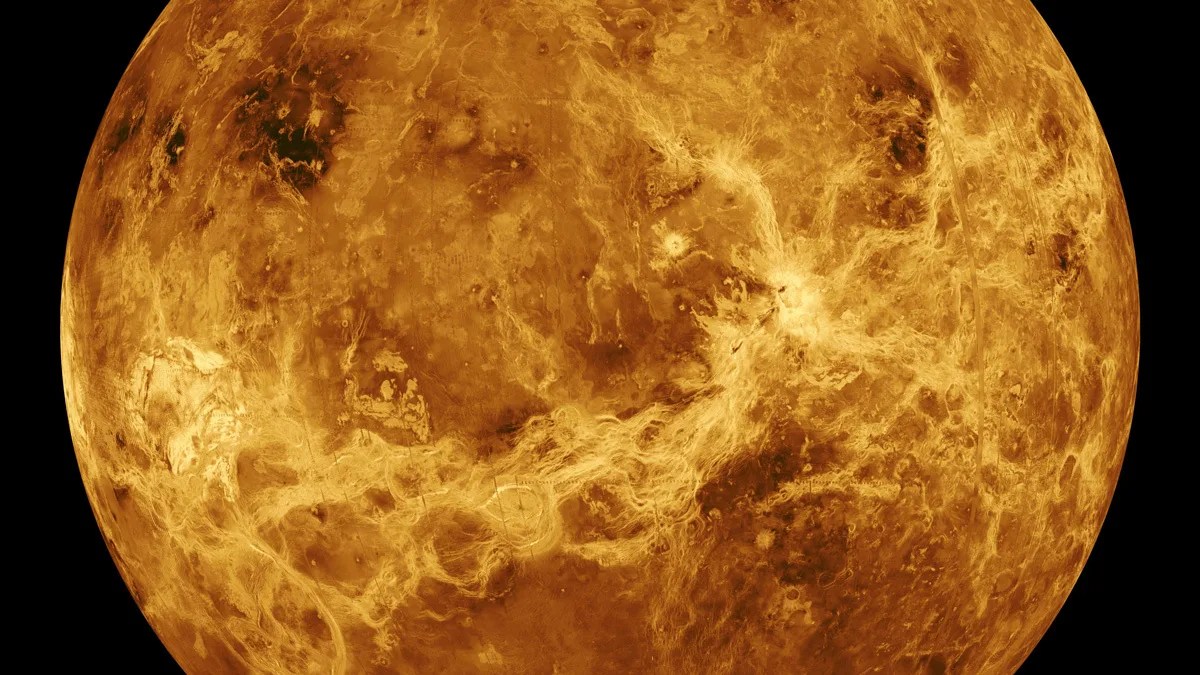

Something is about to happen in remote southern Peru, at 5:00 pm on February 19th in the year 1600. Unsuspecting multitudes in distant foreign capitals will never know how this event reached around the world to torment and kill them.

Winter is coming. Volcanic winter.

For the people of Russia, it brought the worst winter in six centuries. For two years, even the summer temperatures would fall below freezing at night. Two million people, a third of Russia’s population, would die from the resulting famine. It led to the downfall of Tsar Boris Godunov, all because of a volcano that erupted 13,000 kilometres away in Peru.

But that was not the last famine in Russian history. Drought and famines occur frequently. But it wasn’t until nearly three centuries later, in 1891, that the magnitude of suffering was again as ghastly. Winter came early that year and the crops failed. Tsar Alexander III was slow to respond. Wealthy Russian merchants continued to export grain at a profit even as millions went hungry. All the Tsar had to offer his starving subjects was “famine bread”, a miserable mixture of moss, weeds, bark and husks. Half a million Russians perished, while the aristocracy and the wealthy feasted on fresh strawberries from the south of France and clotted cream from England.

The Russian Revolution would not explode for another 30 years, but many historians believe that this famine was the spark that ignited the long fuse. It was to make a lasting impression on the hero of our story, Nikolai Ivanovich Vavilov. His parents were born into poverty but had worked their way up into the upper middle class. All four of the children would grow up to become scientists. Sergey became a physicist. Nikolai grew up to be a botanist.

In 1911, Russia was the largest grain exporter on Earth, although its farming methods were antiquated. The Petrovsky Institute was the only place in Russia where scientists could hope to modernize food production through the new science of genetics. But it was still a matter of controversy:

Liliya Rodina: Debate topic for today, is plant selection a science or not?

Dimitry Ivanov: It is not a science. A farmer knows best. He's been sowing the larger seeds and crossbreeding the fattest animals for thousands of years. What do we scientists have to offer them? Except fancy equations to confuse the peasants. They don't want that. They want bread.

Nikolai Vavilov: The farmer has wisdom and is worthy of our respect. But tragically, he lacks the predictive powers of science. They cannot foretell which traits will dominate or which will be recessive. The farmer plays roulette and he's about as successful as the average gambler. Gregor Mendel made it possible for him to know the odds. To know what number the ball will land on. The moment Mendel expressed his ideas mathematically, agriculture became a science and our only hope to efficiently feed ourselves. And feed the world.

Up, Up, and Away

In 1914, during the First World War, Vavilov began to wonder. Where did the domesticated plants come from? Who were their ancestors? In a love letter, he wrote:

"I really believe deeply in science. It is my life and the purpose of my life. I do not hesitate to give my life even for the smallest bit of science."

The First World War revealed the deep cracks in Russian society and spurred the outbreak of revolution and civil war.

Vavilov established the first of his 400 scientific institutes where the children of peasants and labourers became scientists. All in the service of Vavilov’s dream of ending famine. In 1920, he dared to propose a new law of nature that would make him world-famous:

The same genes perform the same functions in different species of plants. This is because they share a common ancestor. To understand evolution and to guide our breeding work scientifically we must go to the oldest agricultural countries where these common ancestors may still live.

Vavilov knew that every seedling contained its species’ unique message. The contents differ, but all are written in a mysterious language that would not be deciphered for decades. He wanted to preserve every phrase of life’s ancient scripture, to ensure its safe passage to the future. He was among the first to grasp the critical importance of biodiversity. Moreover, he came up with an entirely new concept: a world seed bank that he hoped would be impervious to war and natural catastrophes. There was a scientific underpinning to this humanitarian goal. If you could find the earliest living specimens of the plants we eat, you could parse their sentences and decipher life’s language. You could know how they changed over time. This decryption would make it possible to write new messages. To grow food immune to disease, fungus and insects and resistant to drought.

Vavilov would become a hunter of plants on five continents, venturing to places no scientist dared go before him.

As Vavilov risked life and limb searching for seeds on five continents, his legend as a daredevil came to equal his reputation for scientific genius.

In 1927, in what was then Abyssinia, now Ethiopia, Vavilov discovered the mother of all coffee. It was a good thing too. Because he needed to stay awake all night guarding the camp. As he waited for permission to travel into the interior, he was surprised to receive an invitation from the regent and future emperor of Ethiopia, Rastafari. Or as the world would come to know him, Haile Selassie. Vavilov later recalled:

"It was just the two of us. He questioned me with great interest about my country and its revolution. I informed him that Lenin, our founder, had died, and Joseph Stalin now ruled. I told Rastafari how Stalin's armed robbery of a bank had raised $3 million for the revolution and made him a folk hero in Russia 20 years before."

As Joseph Stalin was having all his political rivals systematically murdered, he began to slash away at the structure of Russian agriculture. In the early 1930s, his stated goal was to modernize Soviet agriculture. But the result was catastrophic. Stalin ordered the kulaks, as the more prosperous peasants were known, to be liquidated as a class. Between five and ten million people perished of famine. But to Trofim Lysenko, this massive tragedy was an opportunity. Lysenko hated Vavilov for his knowledge and fame, and like the snake that he was, he knew exactly when to strike. And ultimately, his venom would be fatal.

"The Garden of Eden must have been somewhere near here in central Asia because this is where the first apples grow."

Vavilov travelled the world identifying the first places on Earth to bear these seeds. Collecting samples of each for safekeeping. All in all, Vavilov brought back a quarter of a million varieties of seeds. But the Russia Vavilov returned to was a different country. One in the grip of the most vicious famine it had ever known. The heady optimism of the revolution had been replaced with dread and despair.

Vavilov’s team began to sort and catalogue every precious seed. They worked tirelessly as if the life of every hungry Russian depended on them.

In Snakes Lysenko…

Lysenko: Comrade Stalin. I have something of vital importance to our nation's security to tell you. I know why the country starves and I know how to turn famine into plenty so that you may triumph over all those who seek to undermine you.

Stalin: Comrade Lysenko, you must be a very powerful man.

Lysenko: The scientists are lying to you. Charles Darwin, Gregor Mendel, Nikolai Vavilov, all liars. Comrade, why do you think the giraffe has a long neck?

Stalin: A giraffe? Why are you wasting my time? So it can nibble on leaves at the top of the trees?

Lysenko: Exactly. But the scientists say no. They believe in imaginary, invisible entities called genes that somehow get changed by equally invisible forces that tell the giraffe to have a long neck and a chicken to have a short one. I don't believe in imaginary things. But Vavilov does and that's why our people starve. While he's been off collecting souvenirs, I've been devising a way to give Mother Russia the thing she needs most - a harvest of wheat in the dead of winter.

Stalin: If this is true, if you really have this power...

Lysenko: I do, Comrade. I do. But I must have a free hand. No more interference from the bourgeois geneticists. They are agents of our enemies. They want to starve Russia into submission.

But wait, that’s not why the giraffe’s neck is long! How could Stalin have fallen for that one!? It was easy. He desperately wanted to believe it. Lysenko was peddling the long-discredited theory of an early 19th-century naturalist named Jean-Baptiste Lamarck, who believed that acquired characteristics, let’s say the length of a giraffe’s neck from straining to get at those leaves up high, could be inherited by the next generation. Lamarck failed to grasp that it took millions of years of evolution and higher survival rates among the generations of giraffes with even slightly longer necks to result in a tall modern giraffe. This increasingly long neck of the giraffe was due to random mutations in the genes that happened to lead to a more successful giraffe. Not their neck-stretching exertions. This had been Charles Darwin’s revolutionary insight, evolution by natural selection.

Lysenko whispered in Stalin’s ear that he could fulfil a centuries-old Russian dream and end the famine that threatened Stalin’s grip on the country. Lysenko would soak the wheat seeds in ice water so that they could still thrive in the ice and snow. A process he called vernalization. Lysenko falsely claimed that the plants’ offspring would inherit the resistance to cold. No time-consuming painstaking crossbreeding required. Only one thing stood in his way – Vavilov and his stubborn adherence to genetics.

The bitter irony was that while Lysenko was spinning fantasies of abundance for Stalin, Vavilov and his team were crossing wheat species from higher altitudes that actually would’ve resulted in heightened food production in Russia.

Liliya Rodina: Nikolai Ivanovich, I tell you, we are in the gravest danger. Three days ago, the secret police came for Yevgeny and Leonid. No one has heard from them since. Their wives are frantic. Lysenko takes every opportunity to blame you for the famine. I tell you, we must stop the experiments in genetics.

Vavilov: Carry on quietly with your work. No matter what happens. We must hurry. We must be like Michael Faraday, working hard and keeping accurate notes of the results. If I disappear, then you must take my place. The only thing that matters is getting the science right. It is the only hope of ending this famine and all the others to come.

Rodina: Comrade, they're going to arrest you and all of us.

Vavilov: Then we better work that much faster.

Stalin’s zeal to push the kulak peasants off their farms and into factories had become a policy of genocide.

A Botanist Witchhunt

Lysenko: Vavilov and his geneticists continue to speak against you. I cannot bear it.

Stalin knew that getting rid of Vavilov might be trouble. The global scientific community admired Vavilov for his ideas and his courage. They had even been willing to move their international genetics congress to Moscow when Stalin wouldn’t let him travel outside the country.

Stalin: Discredit Vavilov first, then you can do with him as you wish.

Trofim Lysenko now mounted a relentless campaign against Vavilov and science. It came to a head at a two-day conference. All the scientists and enemies of science gathered to debate the future of Soviet agricultural policy.

Vavilov: I regret to report that the biochemists are not yet able to distinguish the lentil from the pea by analyzing their proteins.

Lysenko: I reckon that anyone who tries them on their tongue can tell a lentil from a pea.

Vavilov: Comrade, we are unable to distinguish them chemically.

Lysenko: What's the point of being able to distinguish them chemically if you can try them on your tongue? And so we shall soon see how my method of soaking seeds of all kinds in ice water shall lead to a better-fed Motherland.

Vavilov: What? No experiments? No data?

Lysenko: Perhaps you haven't noticed. Your ranks are thinning. Vernalization is going to provide a huge winter harvest. You are either with our plan or...

Vavilov: You can take me to the stake. You can set me on fire. But you can't make me lie about science!

The crowd had just witnessed a man committing suicide. It would be the last time Nikolai Vavilov was publicly seen.

In preparation for what was coming, he had warned his colleagues that they must ask for transfers to other institutes to save themselves. His closest colleagues valiantly refused to distance themselves from Vavilov.

Nikolai Vavilov was arrested and questioned, but he maintained his innocence. However, Vavilov’s tormentor had plenty of experience softening up such stubborn subjects. He started interrogating Vavilov for ten to twelve hours at a time, usually rousing him out of his bed in the middle of the night. He must’ve been tortured, because his legs were so swollen that he was unable to walk. Vavilov would be dragged back to his cell and crawl to a place on the floor and just lie there, unable to move. It kept on for 1,700 hours, more than 400 sessions. Until Vavilov finally broke. A year after his arrest, he was sentenced to be shot. He was taken to the place of his execution where he languished for months.

The Siege of Leningrad

Just when things couldn’t get any darker, an even greater darkness descended.

The siege of Leningrad was, by any metric, the most ghastly of all.

Ivanov: We don't even know if he's alive.

Rodina: Until we do, we must find it within ourselves to do as Nikolai Ivanovich would. If the siege lasts, our fellow citizens will get very hungry. This building contains several tons of edible material. We must figure out how to protect every last seed for the time when the world returns to its senses.

In all of history, no team of scientists has ever been tested so cruelly. They were pushed beyond the breaking point and yet they did not break.

On Christmas Day alone in 1941, 4,000 people starved to death in Leningrad. The city had been under siege by Hitler’s army for more than 100 days. The temperature was -40°C and the city’s entire infrastructure had collapsed. It was only a matter of time, Hitler thought to himself, before Leningrad succumbed to his will. No city could endure such suffering for very long. While Stalin fretted over the safety of the artworks at the Hermitage Museum, he never gave a single thought to Vavilov’s seed bank. But Hitler had already taken the Louvre art museum of Paris. He coveted something much more precious – Vavilov’s treasure. Hitler had established a special tactical unit of the SS, Russland-Sammelkommando, the Russian collector commandos, to take control of the seed bank and retrieve its living riches for future use by the Third Reich. They waited at the ready, like a pack of Dobermans, straining to be unleashed on the institute.

The botanists were now down to a ration of two slices of bread per day. But still, they continued their work. In a way, the German army was the least of their worries. Rats and vermin became a constant bother.

Alexander Stchukin: If only Vavilov were here. I feel so lost without him.

Rodina: Dear comrade, as painful as it is, we must accept that he's gone forever.

But Vavilov was alive… barely. He had been moved to another prison in another city. In a final attempt for freedom, he wrote to the state:

"I am 54 years old, with vast experience and knowledge in the field of plant breeding. I would be happy to devote myself entirely to the service of my country. I request and beg you to allow me to work in my special field, even at the lowest level."

But no answer ever came. The state had decided not to shoot him. They had a crueller fate in mind for the man who did more than any other to eliminate famine and hunger – he would be deliberately and slowly starved to death.

800,000 other human beings had starved to death in Leningrad. Besieged by the German forces from September 1941 until January 1944, the city somehow still managed to hold out against the relentless assault. The meagre rations of two slices of bread a day had run out long before, and the protectors of Vavilov’s treasure began to succumb to hunger amidst the plenty that their sacred honour prevented them from consuming:

- Botanist Alexander Stchukin, specialist in groundnuts.

- Liliya Rodina, an expert on oats.

- Dimitry Ivanov, a world authority on rice.

The botanists perished from hunger, and yet not a grain of rice in the collection was unaccounted for.

Slaying the Horseman

And what of Vavilov’s nemesis, Trofim Lysenko? He maintained his death grip on Soviet agriculture and biology for another two decades until three of Russia’s most distinguished scientists publicly denounced him for his pseudoscience and his other crimes.

After Stalin’s death and the recognition of the damage he and Lysenko had done to the Soviet Union, Nikolai Vavilov could once again be talked about in public. The Institute of Plant Industry was renamed after him, and it still thrives.

So why didn’t the botanists at the institute eat a single grain of rice? Why didn’t they distribute the seeds, nuts and potatoes to the people of Leningrad who were dying of starvation every day for more than two years?

Did you eat today? If the answer is yes, then you probably ate something that descended from the seeds that the botanists died to protect. They gave their lives for us.

If only our future was as real and precious to us as it was to them.
